Resource Plays Reshape Geophysics

By Nancy J. House

LITTLETON, CO.–Throughout its history, the evolution of petroleum geophysics arguably has been defined more by progressions in technology than market forces. That was because the basic purpose of geophysics remained unchanged for decades: reducing exploration risk and improving drilling success rates. The driving force was incremental technological improvements to achieve those core objectives. The unconventional revolution has changed that completely, expanding geophysics far beyond the boundaries of exploration into a new frontier with new types of technical challenges.

To be sure, technology continues to advance geophysical capabilities in powerful ways. However, the demands of U.S. resource plays are dictating what new capabilities are being developed and how they are being applied, which in turn, is reshaping the science of geophysics as well as the geoscientist’s job description.

In short, market forces have shifted the focus of geophysics more to reservoir development, the traditional domain of engineering. In tight oil and shale gas plays, geophysicists are being called on to solve very different types of problems than in the world of conventional reservoir exploration. They are responding admirably, leveraging sophisticated techniques to improve the economic development of tight oil and shale gas reservoirs. The requirements in these plays most certainly will continue to impact the tools and skill sets geophysicists use to get the job done.

Rock properties rule in the realm of unconventionals. Operations are focused on delineating the most “fracable” rock within target zones and optimizing well bore placement. That requires understanding subsurface drilling hazards and the orientation of natural fractures, as well as accurately estimating stress profiles, characterizing rock properties and fluids, etc. Seismic data are integral to answering all of these questions.

At the same time, the scale and pace of activities in resource plays requires much more dynamic and high-speed decision making. In a very real sense, time is money when dozens of wells are in the planning and development stage. With drilling times averaging only six or seven days per horizontal well in many plays, the geoscientist has to be able to analyze and interpret an incredible amount of information in a short time to help improve performance from one well to the next on a pad site. Technology is helping geophysicists keep up with increasing data volumes as they look at geophysical responses in a new way to answer critical reservoir development questions.

Time Value Of Geophysics

We are approaching the ideal of what geophysics can do: determining the kind of fluids in the rock, finding the rock that will best fracture under pressure, determining fracture direction and finding the sweet spots in terms of total organic content. The challenge comes in communicating how we achieve that ideal and the trade-offs involved, recognizing the time value of geophysics.

I was working as a staff geophysicist at Encana Oil & Gas during the early days of the unconventional revolution. The teams responsible for developing shale gas and tight oil formations became frustrated because of the time it took to process and analyze geophysical data. Every month, we had a meeting where all team members were expected to summarize their progress against key performance indicators. The geophysicists had to learn to put progress in terms that were meaningful to the overall development team.

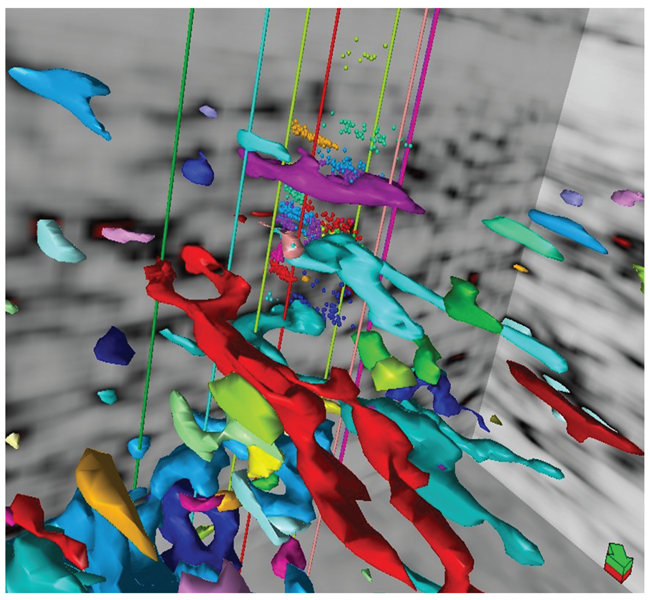

By extending amplitude variation with offset (AVO) to the prestack domain, geophysicists simultaneously can invert for compressional-wave velocity, shear-wave velocity and density. These three fundamental properties dictate elastic and inelastic rock responses, and tie directly to how well a rock will respond to hydraulic fracturing. This image shows geobodies extracted from a semblance attribute displayed with wellbores and microseismic event epicenters.

I also learned that sometimes I had to give an answer before I was completely comfortable with it. I often had to accept that an 80 percent solution was good enough at decision time. If geophysicists are not comfortable with communicating an 80 percent answer, then they are not going to have the opportunity to communicate any answer, because development will proceed without them.

Geophysicists today are still learning the new language of unconventional development. Conversations center on well placement for greater reservoir contact and potential communication with adjacent wells. It is commonplace for geologists, engineers and geophysicists to collaborate, making it imperative that geophysicists start thinking more about how to communicate the value of what we do.

It was enlightening for me to teach a basic geophysics class that included an engineer. That student in particular was thrilled to finally understand how attributes are extracted from seismic data into the reservoir model, and the importance of proper acquisition, processing and interpretation. He later forwarded a paper that had been presented at an Unconventional Resources Technology Conference describing the value of geophysics to engineering, proving that a geophysicist and engineer can learn to speak the same language!

A friend in the seismic industry has expressed frustration to me because there are beautiful datasets that are not being leveraged by operators. It is up to the geophysicist to effectively communicate how these datasets can improve drilling and completion decisions. Otherwise, even the best data and interpretations have no value.

One of the best techniques I have used to convey the value of geophysics is to think in terms of well cost. For example, in five square miles of acreage there could be 80 wells planned. Divide the cost of the geophysical data and its interpretation across those 80 wells, and it likely will be a small percentage of the overall cost per well.
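The per-well arithmetic can be sketched in a few lines of Python. Only the 80-well count comes from the example above; the survey cost and the drilling-and-completion cost per well are hypothetical placeholders chosen purely for illustration, and the function name is likewise an assumption.

```python
def geophysics_cost_share(survey_cost, n_wells, well_cost):
    """Spread a geophysical program's cost over the planned wells.

    Returns the cost allocated to each well and that allocation as a
    fraction of the cost of a single well.
    """
    per_well = survey_cost / n_wells
    return per_well, per_well / well_cost


# 80 wells is the figure from the article; the two dollar amounts are
# hypothetical placeholders, not data from any real program.
per_well, share = geophysics_cost_share(
    survey_cost=2_000_000,  # hypothetical: acquisition + processing + interpretation
    n_wells=80,
    well_cost=7_000_000,    # hypothetical: drilling and completion cost per well
)
print(f"Geophysics cost per well: ${per_well:,.0f} ({share:.2%} of well cost)")
```

Swapping in a team's own survey and well costs turns the calculation into a one-line talking point for a development meeting.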

Taking A New Approach

Because unconventional plays fundamentally have changed the way operators approach reservoir development, geophysicists are having to take a new approach as well. I break this approach into three basic categories, increasing in complexity and effort with each subsequent category.

Finding the resource comes first. Often geologists and geophysicists just know where and how complex the resource is in a basin. They can read it from a regional standpoint with only a few 2-D lines. These lines are common, leading engineers to assume that they are always available and quickly interpreted.

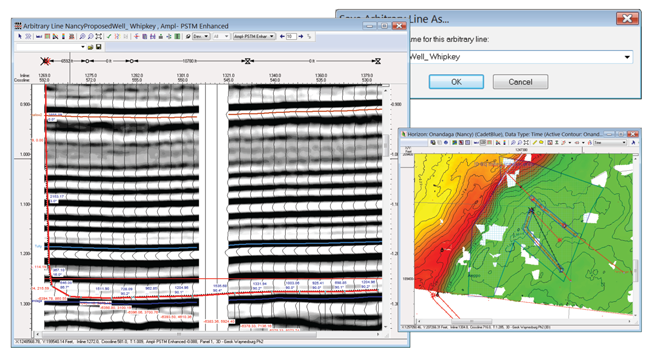

Proper subsurface imaging with 3-D seismic is fundamental to horizontal well placement. This example shows the planned kickoff angle, in-zone well path and lateral length based on seismic data interpretation for a Marcellus drilling program in southwestern Pennsylvania.

The second category has to do with optimizing reservoir contact. Here, the team is focused on better execution of the drilling program. I worked with an operator in the Marcellus Shale that managed to hold a large acreage position by drilling vertical wells. These wells told the company that the subsurface was more complex than what had been indicated by vintage shallow wells. The wells often hit repeats of the basal limestone, and when the company began drilling horizontals, it had to figure out which zone to geosteer into. There was no seismic available, so the company often had to sidetrack the wells a number of times in order to locate the zone of interest.

The operator had enough of these wells to determine statistically that one in six wells had to be sidetracked in order to steer into the right occurrence of the shale. Each sidetrack cost approximately $500,000. The company planned to drill 600 wells in the area, which could cost up to $50 million in sidetracks in the absence of better information.
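The sidetrack exposure follows directly from those three numbers. The short Python sketch below reproduces the arithmetic, assuming one sidetrack per affected well (wells that need several sidetracks would push the total higher); the function and parameter names are illustrative only.

```python
def expected_sidetrack_cost(n_wells, wells_per_sidetrack, cost_per_sidetrack):
    """Expected sidetrack spend if one in `wells_per_sidetrack` wells
    needs a single sidetrack (assumption: one sidetrack per affected well)."""
    n_sidetracks = n_wells / wells_per_sidetrack
    return n_sidetracks * cost_per_sidetrack


# Figures quoted in the article: 600 planned wells, one in six sidetracked,
# roughly $500,000 per sidetrack.
total = expected_sidetrack_cost(
    n_wells=600, wells_per_sidetrack=6, cost_per_sidetrack=500_000
)
print(f"Expected sidetrack cost: ${total:,.0f}")  # Expected sidetrack cost: $50,000,000
```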

Sidetracks could be completely eliminated if the area was properly imaged because the 3-D seismic would show the angle for kickoff and whether a well bore would encounter a fault, causing the well to be out of zone. From a pure cost-savings perspective, the operator could afford to spend up to $50 million on seismic to mitigate that risk, in effect offsetting $50 million in sidetrack costs. That is how significantly 3-D seismic influences well drilling.

In addition, seismic analysis can increase the effective lateral length, or the total feet the well bore is actually in the zone’s sweet spot. The value of the well is based on how many thousands of feet are in zone, so this is a direct contribution to well performance. Economists and engineers on the team know the numbers, and we as a profession need to get better at communicating in those terms.

Once there is a good tie-in of rock properties from horizontals and good data from well logs, important attributes can be queried and extracted from the seismic–the third category. For example, the geophysicist can calculate which rock zone is more fracable than others. This comes from simultaneous prestack inversion of carefully designed and acquired 3-D seismic data tied into calibrated wells with core and good log suites, including crossed dipole sonic logs. These data allow extraction of compressional-wave velocity, shear-wave velocity and density from the 3-D dataset.
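As a rough illustration of why those three properties matter, the standard isotropic elasticity relations turn compressional-wave velocity, shear-wave velocity and density into Poisson's ratio and Young's modulus, two quantities commonly used when ranking how readily rock will fracture. The Python sketch below applies only those textbook relations; the sample values are hypothetical, and any fracability ranking built on the results would be an additional modeling choice that this article does not specify.

```python
def elastic_moduli(vp, vs, rho):
    """Isotropic elastic moduli from P velocity, S velocity and density.

    vp, vs in m/s and rho in kg/m^3; returns (Poisson's ratio, Young's
    modulus in Pa) using the standard linear-elastic relations.
    """
    mu = rho * vs ** 2              # shear modulus
    lam = rho * vp ** 2 - 2.0 * mu  # Lame's first parameter
    poisson = lam / (2.0 * (lam + mu))
    young = mu * (3.0 * lam + 2.0 * mu) / (lam + mu)
    return poisson, young


# Hypothetical values for a single inverted sample, for illustration only.
vp, vs, rho = 4000.0, 2500.0, 2500.0
nu, E = elastic_moduli(vp, vs, rho)
print(f"Poisson's ratio: {nu:.3f}, Young's modulus: {E / 1e9:.1f} GPa")
```

Applied trace by trace to an inverted volume, the same relations yield the property cubes that get compared against core and log calibration.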

All of this information comes from the very far seismic traces at the very late arrival times sorted into well-sampled azimuths, which not long ago were either not acquired or simply discarded. The design and acquisition must be carefully planned and executed to eliminate large acquisition holes. A typical recording patch (the part of a seismic survey that is actively listening to a specific source point) is on the order of 12-15 miles and may incorporate as many as 10,000 channels of data. Years ago, contractors might have that many channels available in the company; now it is necessary to have three times that many available and on the ground to enable efficient acquisition.

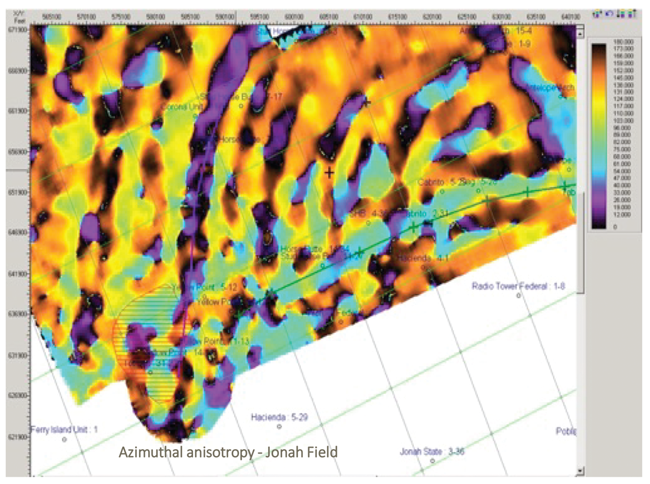

Improved computing power and processing techniques have enabled us to use this information for greater insights. Now it is possible to calculate fracture intensity from long offsets represented equally in all directions of the seismic survey. We use velocities to determine fast and slow directions, which are parallel and perpendicular to fractures, respectively. This pinpointing of the most fracable rock is made possible by long offset data acquisition, processing and interpretation.
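One simple way to picture the fast and slow directions is to fit a cosine of twice the azimuth to velocities measured in different directions. The Python sketch below does this for a single location, assuming the azimuths are evenly sampled over 0-180 degrees (the "represented equally in all directions" condition), which lets a plain Fourier projection stand in for a full least-squares fit. The synthetic velocities and all names are hypothetical, and production azimuthal processing is considerably more involved.

```python
import math


def fit_fast_azimuth(azimuths_deg, velocities):
    """Fit v(phi) = a + b*cos(2*phi) + c*sin(2*phi) by Fourier projection.

    Assumes azimuths are evenly spaced over [0, 180) degrees. Returns the
    fast azimuth, the slow azimuth (both in degrees) and the fractional
    velocity variation (amplitude of the 2*phi term over the mean).
    """
    n = len(velocities)
    phis = [math.radians(az) for az in azimuths_deg]
    a = sum(velocities) / n
    b = 2.0 / n * sum(v * math.cos(2 * p) for v, p in zip(velocities, phis))
    c = 2.0 / n * sum(v * math.sin(2 * p) for v, p in zip(velocities, phis))
    fast = math.degrees(0.5 * math.atan2(c, b)) % 180.0
    slow = (fast + 90.0) % 180.0
    return fast, slow, math.hypot(b, c) / a


# Synthetic example: fast direction at 40 degrees, 2.5 percent variation.
azimuths = list(range(0, 180, 15))
velocities = [
    4000.0 + 100.0 * math.cos(2 * math.radians(az - 40.0)) for az in azimuths
]
fast, slow, strength = fit_fast_azimuth(azimuths, velocities)
print(f"fast {fast:.1f} deg, slow {slow:.1f} deg, variation {strength:.2%}")
```

Under the interpretation described above, the fast azimuth would be read as the fracture strike and the size of the variation as a proxy for fracture intensity.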

Acquiring ‘Good’ Data

The most expensive data an operator will ever acquire are bad data. The best well plan begins with data acquired through seismic and wellbore data collection. Surveys must be designed to capture far offsets in order to create a complete model of offsets and azimuths. Shortcutting the process will jeopardize the ability to model fracture intensity and extract usable rock properties.

New survey technology also helps stabilize acquisition holes. These holes typically disturb data around them and prevent accurate processing. They are analogous to black holes in space that distort light signals around them, or window screen holes that allow flies to enter a home. Five-dimensional interpolation has proven helpful in stabilizing the data around acquisition holes, allowing processing to continue.

Simultaneous sources also have enhanced data acquisition by providing multiple offsets for every position. During processing, the sources are sorted out. Think of it as being able to identify the voice of a single student in a classroom where everyone is talking at once. Yes!

For land data, 3-D acquisition equipment has gotten a lot smaller and more portable. Drones can now harvest data. It used to be that the nodes themselves would have to be moved to a central area for harvesting and recharging. Surveys can be much more efficient and less environmentally invasive today. The nodes are small, the drones are fast, and best of all, geophysicists get to start seeing data sooner. Light detection and ranging (LIDAR) also is gaining traction in data acquisition and other surface and canopy mapping.

This image shows 3-D seismic azimuthal processing to highlight fractured areas within the giant Jonah Field in the Green River Basin. The data contain long and complete offsets sorted into azimuthal directions both parallel to fractures (fast) and perpendicular to fractures (slow).

Microseismic is a beautiful technology that goes back to the fundamental principles of locating the epicenters of earthquakes. It is a wonderful application of geophysics and another challenge for geophysicists who want to be more technically robust, while at the same time provide information to engineers who need to make quick decisions.

Some microseismic relies on imaging theory, with recording done at the surface and images built from queries. Others place an array down the borehole that listens and locates events. Both are used to measure completion orientation and effectiveness.
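The earthquake-location principle behind microseismic can be illustrated with a brute-force grid search: try candidate source positions, predict arrival times at each receiver and keep the position that best matches what was observed. The Python sketch below assumes a constant-velocity medium, a single wave type, surface receivers and an unknown origin time; the receiver layout, velocity and event are all hypothetical, and commercial processing uses far more realistic velocity models and detection methods.

```python
import math
from itertools import product


def locate_event(receivers, arrivals, velocity, xs, ys, zs):
    """Grid-search an event location from arrival times.

    For each candidate (x, y, z), the unknown origin time is estimated as
    the mean of (observed arrival - predicted travel time); the candidate
    with the smallest sum of squared residuals is returned.
    """
    best, best_misfit = None, float("inf")
    for cand in product(xs, ys, zs):
        tt = [math.dist(cand, r) / velocity for r in receivers]
        t0 = sum(a - t for a, t in zip(arrivals, tt)) / len(tt)
        misfit = sum((a - t0 - t) ** 2 for a, t in zip(arrivals, tt))
        if misfit < best_misfit:
            best, best_misfit = cand, misfit
    return best


# Hypothetical surface array (meters), velocity (m/s) and event.
receivers = [(0, 0, 0), (1200, 100, 0), (200, 900, 0),
             (1000, 1100, 0), (600, 500, 0), (-300, 600, 0)]
velocity = 3500.0
true_event, origin_time = (400, 700, 2000), 0.25
arrivals = [origin_time + math.dist(true_event, r) / velocity for r in receivers]

grid_xy = range(0, 1001, 100)
grid_z = range(1500, 2501, 100)
located = locate_event(receivers, arrivals, velocity, grid_xy, grid_xy, grid_z)
print("Located event at", located)
```

With noise-free synthetic arrivals and the true position sitting on the search grid, the search recovers the event exactly; real data add picking noise and velocity uncertainty, which is where the geophysicist's judgment comes in.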

North American hydrocarbon basins have been carpeted by high-quality data for unconventional resources over the past few decades. These datasets are sitting in libraries owned by data suppliers and are still good. They are a valuable asset that should be leveraged by companies large and small. Creative licensing is allowing smaller operators to gain access to the data they need.

Man And Machine

New acquisition methods such as full-sampling azimuths dramatically are increasing the data volume collected. Thankfully, computing advances have allowed us to keep up. There also is a push for processing data using machine learning to automate and speed processing tasks. Of course, that requires a deep knowledge of basic geophysical principles to be able to quantify results of machine learning and adapt them to different problems. In my experience, there is an intuitive component that pursues funny little things that may end up leading to great insight. Much has yet to be done in this promising frontier.

A friend was involved in a field processing program, shooting 2-D along the line. There was always a short delay in first arrivals, which was confounding. The client had employed his team as field processors, allowing first-hand observation of operations. While in the field, he noted that the shots were not exactly along the survey line. This might have shown up on the observer reports, but to someone who did not understand how the “uphole times” (time for the buried shot to be recorded on the surface) were used, it might not have seemed important. Because it was not noted anywhere, this discrepancy had not been compensated for in the processing.

Attention to detail is required even in the face of massive data and pressure to produce more in less time with less investment. Human curiosity and intuition are hard to replace with a machine.

At one point in my career, I was part of a team that was recording microseismic and vertical seismic profiling data. It occurred to us that there might be a change in rock properties. Friends at Massachusetts Institute of Technology were looking at modeling fractures in rock. Their models had discrete zones of fractured rock inside of unfractured host rock with the same properties. This is exactly like a hydraulic fracture. We wondered whether the result would be measurable if perhaps 5 percent of the total gross body were fractured in these little discrete tubes.

The client allowed us to send the time-lapse 3-D VSP data to the MIT researchers, who came up with really profound results. Not only was the reflection from a fractured zone of rock similar in form to an event generated by fracturing the rock, but they also found that the modeling could identify the difference between an open and closed fracture by the length and shape of the wavelet coda.

Geophysics has been at the forefront of artificial intelligence, neural networks and machine learning. There is simply too much data for geophysicists to digest. That does not, however, mean that geophysicists will be replaced. Instead, they will be teachers and interpreters at a higher level.

Geoscientists always want the best, most expensive and newest imaging data. But a lot of oil and gas reserves are found using lower-quality images, and even the best images still have uncertainties that cannot be removed by anything but diligent, thorough interpretation. We can get more certain of the shape, but still have to calibrate that to known well information that has been integrated carefully into the seismic data.

Over the past 20 years, computers have become much more powerful and less expensive, making it possible to do multiple-shot processing and complex statistical computations. The first Society of Exploration Geophysicists Advanced Modeling Program (SEAM) model, a partnership between industry and SEG designed to advance geophysical science and technology through subsurface models and synthetic datasets, took 30 geophysicists and geologists to come up with a subsalt model and months of time on a government computer to calculate the traces. Once an answer was found to the imaging problem, then interpreters were able to build on that.

Most imaging problems continue to have lots of uncertainty, but computing power combined with good science is enabling far more advanced solutions to reduce uncertainty in much shorter cycle times.

Expanding Horizons

SEG recently has undertaken an effort to help define how geoscientists can move from graduates to qualified professionals in a rigorously defined manner. Essential nontechnical skills once were included in the first five years of work at major and large independent companies, but this training has diminished over the past few decades. SEG has proposed revamping its continuing education portfolio to correlate more closely with the skills inventories and job tasks that a panel of industry experts has identified as completing the passage from graduated student to qualified professional. In addition, SEG’s proposed Leadership, Diversity and Talent program will combine career development skills and experiences in which young geoscientists work with a mentor on a technical program, preparing presentations, risk analysis and petroleum economics.

Geoscientists without Borders is another important SEG initiative. The program began in 2006 after a devastating tsunami triggered by an earthquake in the Indian Ocean, seeking to provide better early warnings in the future. Geoscientists without Borders figured out the technical aspects very quickly, but communicating the science was more difficult. The team ended up with a simple rule of 20s. If an earthquake shakes the ground for more than 20 seconds, one has 20 minutes to get to an elevation 20 meters higher. This simple message, posted on signs in the affected areas, may save thousands of lives in the future.

Years ago, I was part of a geophysical field project in the San Luis Valley in Colorado. It was one of those deep mountain basins, with a water table that goes out all the way to a few inches from the surface. I remember my professor noting that there would come a time when water would be more valuable than oil. I am proud to be serving as SEG president at a time when our members are actively involved in locating water supplies and other humanitarian projects around the world. It is gratifying to have an opportunity to give voice to the incredible value of geophysics to the energy industry and beyond for years to come.

Nancy J. House is principal scientist at Integrated Geophysical Interpretation in Littleton, Co., and president of the Society of Exploration Geophysicists. She is a former president of the Denver Geophysical Society. House began her 39-year career with Exxon as an exploration geophysicist. She subsequently served in senior geophysical, geophysical advisory/consulting and project management roles at major and independent companies, including Phillips Petroleum, Mobil, Repsol YPF, Twin Arrow Petroleum, Double Eagle Petroleum, Encana, Chevron and EXCO Resources. She has worked on geophysical projects all over the world, including the U.S. onshore and offshore, South America and Africa. House holds a B.S. in geology/geophysics from the University of Wyoming and master’s degrees in geophysics and seismology from Colorado School of Mines.

For other great articles about exploration, drilling, completions and production, subscribe to The American Oil & Gas Reporter and bookmark www.aogr.com.